Key Takeaways

- LLM visibility tools vary widely in prompt scale, platform coverage, and localization.

- Brand mentions are not enough to measure real AI authority.

- Citation share shows trust better than simple mention counts.

- AI answers change by market, platform, and prompt wording.

- Enterprise teams need connected reporting across AI, organic, and paid search.

The Problem Most Enterprise Teams Discover Too Late

When a buyer asks ChatGPT “what are the best enterprise search intelligence platforms,” the AI doesn’t return a ranked list from Google. It synthesizes an answer from the sources it trusts most, and your brand is either in that answer or it isn’t.

That gap between how a brand performs in traditional search and how it appears inside AI-generated answers is now measurable. A growing category of software has emerged specifically to track it: LLM visibility trackers.

But the category has matured unevenly. Tools that launched 18 months ago to serve agencies and mid-market companies are now being evaluated by enterprise teams with requirements those tools weren’t designed to meet. The result is a market that looks more uniform than it is and evaluation processes that miss the questions that actually matter at scale.

This guide is for marketing and search leaders beginning that evaluation. It covers how the tools work, how the market is structured, what separates adequate tools from genuinely useful ones, and the questions worth asking before any vendor conversation.

How LLM Visibility Tracking Tools Work

All LLM visibility trackers follow the same basic architecture. Understanding it helps in evaluating where tools differ.

Step 1 – Prompt library construction.

The tool maintains a library of industry-relevant questions and prompts, the kinds of queries real buyers ask AI systems. For a search intelligence company, this might include prompts like “what platforms track brand visibility in AI search” or “which tools monitor citations in ChatGPT.” The size and quality of this prompt library is one of the most consequential differences between tools, and one of the least prominently advertised.
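
As a rough illustration of how a prompt library can grow beyond a handful of seed queries, the sketch below expands a few phrasing templates across verbs and topics. The templates, verbs, topics, and the `build_prompt_library` helper are all hypothetical; this is not how any particular vendor builds its library, only a minimal example of modeling phrasing variation.

```python
from itertools import product

# Hypothetical phrasing templates and terms. A real library would be far
# larger and grounded in how buyers actually phrase questions.
TEMPLATES = [
    "what platforms {verb} {topic}",
    "which tools {verb} {topic}",
    "best software to {verb} {topic}",
]
VERBS = ["track", "monitor"]
TOPICS = ["brand visibility in AI search", "citations in ChatGPT"]


def build_prompt_library(templates, verbs, topics):
    """Expand every template with every verb/topic pair, dropping duplicates."""
    seen = set()
    prompts = []
    for template, verb, topic in product(templates, verbs, topics):
        prompt = template.format(verb=verb, topic=topic)
        if prompt not in seen:
            seen.add(prompt)
            prompts.append(prompt)
    return prompts


library = build_prompt_library(TEMPLATES, VERBS, TOPICS)
print(len(library))  # 3 templates x 2 verbs x 2 topics = 12 prompts
```

Even this toy expansion shows why library size is only half the question: the same three templates with more verbs and topics would produce many more prompts without covering any new buyer intent.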

Step 2 – Query execution across AI platforms.

The tool sends those prompts to AI platforms such as ChatGPT, Perplexity, Google AI Overviews, and Google AI Mode, and captures the full responses. This happens at scale and continuously, so changes in AI answers are tracked over time rather than captured in one-off snapshots.

Step 3 – Response parsing and analysis.

The tool analyzes each AI response for brand mentions, citation sources, position within the answer, sentiment, and competitive presence. This is where the sophistication gap between tools becomes most visible: some tools detect mentions; others understand context, tone, and source attribution. GrowByData’s LLM Intelligence, for example, distinguishes between list mentions, narrative citations, and primary source references, a distinction most tools don’t make.
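
To make the parsing step concrete, here is a minimal sketch that covers only the simplest signals: which tracked brands appear in a response, in what order, and which domains are cited by URL. The brand names, the sample text, and the `parse_response` helper are invented for illustration. Sentiment and mention-type classification are deliberately left out, and this is not a description of any vendor's method.

```python
import re
from urllib.parse import urlparse


def parse_response(text, brands):
    """Return brands in order of first appearance and the cited URL domains."""
    first_seen = {}
    for brand in brands:
        # Whole-word, case-insensitive match on the brand name.
        match = re.search(r"\b" + re.escape(brand) + r"\b", text, re.IGNORECASE)
        if match:
            first_seen[brand] = match.start()
    # Earlier position in the answer comes first.
    mention_order = sorted(first_seen, key=first_seen.get)
    urls = re.findall(r"https?://[^\s)\]]+", text)
    domains = sorted({urlparse(u).netloc.lower() for u in urls})
    return {"mention_order": mention_order, "cited_domains": domains}


# Hypothetical AI answer and brand names.
sample = (
    "Popular options include Acme Insights and BrightTrack. "
    "Acme Insights publishes benchmark data (https://acme.example/report). "
    "See also https://reviews.example/top-tools."
)
result = parse_response(sample, ["BrightTrack", "Acme Insights", "Nimbus"])
print(result)
# {'mention_order': ['Acme Insights', 'BrightTrack'],
#  'cited_domains': ['acme.example', 'reviews.example']}
```

A mention detector like this cannot tell a passing list entry from a primary source reference, which is exactly the gap the paragraph above describes.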

Step 4 – Competitive benchmarking and reporting.

The tool surfaces findings as comparative data: your brand’s AI presence against named competitors, across platforms, and over time.
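
A benchmarking view of this kind can be sketched as a mention rate per brand per platform. The record format, brand names, and `mention_rate_by_platform` function below are assumptions made for the example, not a real tool's schema.

```python
from collections import defaultdict


def mention_rate_by_platform(records, brands):
    """Share of responses on each platform that mention each brand."""
    totals = defaultdict(int)
    hits = defaultdict(lambda: defaultdict(int))
    for rec in records:
        totals[rec["platform"]] += 1
        for brand in brands:
            if brand in rec["brands_mentioned"]:
                hits[rec["platform"]][brand] += 1
    return {
        platform: {b: hits[platform][b] / total for b in brands}
        for platform, total in totals.items()
    }


# Hypothetical parsed responses: one record per prompt per platform.
records = [
    {"platform": "ChatGPT", "brands_mentioned": {"YourBrand", "Competitor"}},
    {"platform": "ChatGPT", "brands_mentioned": {"Competitor"}},
    {"platform": "Perplexity", "brands_mentioned": {"YourBrand", "Competitor"}},
    {"platform": "Perplexity", "brands_mentioned": {"YourBrand"}},
]
rates = mention_rate_by_platform(records, ["YourBrand", "Competitor"])
print(rates["ChatGPT"])     # {'YourBrand': 0.5, 'Competitor': 1.0}
print(rates["Perplexity"])  # {'YourBrand': 1.0, 'Competitor': 0.5}
```

Adding a date field to each record and grouping by week would give the over-time dimension; that is omitted here to keep the sketch short.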

How the Market Is Structured: Three Tiers

The LLM visibility tracking market in 2026 has consolidated around three distinct categories. They are not interchangeable.

Tier 1

SEO Platforms with LLM Modules

Examples: SEMrush, SE Ranking

Established SEO platforms have added AI visibility tracking to existing suites. The appeal is consolidation — organic rankings, keyword data, site audits, and LLM tracking under one roof.

Strengths: Accessible entry point for current users. Broad keyword and organic data alongside AI mentions. Easier stakeholder buy-in.

Limitations: LLM tracking is an add-on, not a core product. Smaller prompt libraries, binary sentiment, and limited geographic coverage. These modules rarely meet the enterprise bar.

Tier 2

Standalone AI Monitoring Tools

Examples: Profound, Otterly

Built specifically for LLM monitoring. Dedicated prompt testing, competitive benchmarking, and more focused AI visibility reporting — a meaningful step forward from bolt-on modules.

Strengths: Faster iteration on AI-specific features. More deliberate thinking around citation analysis and prompt research. Accessible mid-market pricing.

Limitations: Designed for agencies and growth-stage companies. Multi-market tracking, large competitive sets, and integration with organic and paid data require workarounds. These tools track AI visibility in isolation.

Tier 3

Enterprise Search & LLM Intelligence Platforms

Built for enterprise scale across search and AI

A smaller category built to operate at enterprise scale across both total search and AI visibility. These platforms track thousands of prompts across markets, languages, and devices, not a fixed seed list. They unify LLM intelligence with organic, paid, and SERP feature tracking, because at enterprise scale those signals don’t exist in isolation.

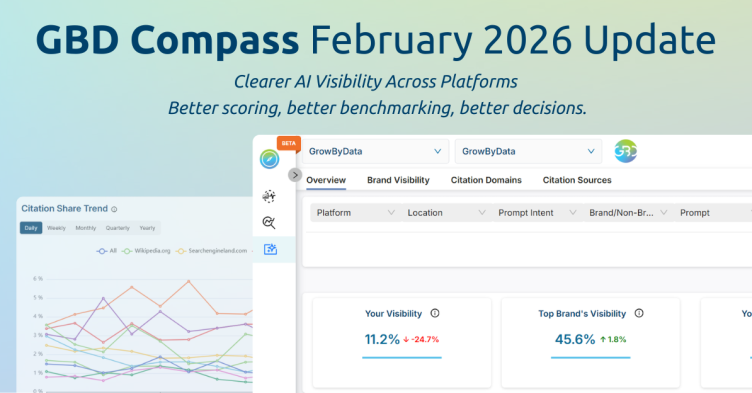

GrowByData’s LLM Intelligence sits in this tier. It monitors brand visibility, attributed citation share, and sentiment across ChatGPT, Perplexity, Gemini, Google AI Overviews, and Google AI Mode — integrated with GrowByData’s broader Search Intelligence platform so AI, organic, and paid data live in one view rather than separate dashboards.

Strengths: Volume and granularity matched to enterprise needs. Cross-channel integration (AI alongside organic, shopping, SERP features). Multi-market and multi-language coverage built in. Analyst support that converts data into decisions.

Limitations: Higher investment. More complex onboarding. Built for teams with existing search intelligence maturity.

The Comparison: What to Evaluate Across Tiers

The table below maps the criteria that matter most at enterprise scale against what each tier typically offers. Individual tools within each tier vary, so use this as a framework for structuring vendor questions, not a definitive scorecard.

Five Questions to Ask in Every Vendor Conversation

Most vendor demonstrations lead with dashboards. These five questions get beneath the interface to the data architecture that actually determines whether a tool is useful.

1. How large is your prompt library, and how is it constructed?

A tool testing 200 prompts produces fundamentally different data than one testing 20,000. More importantly: how are the prompts built? Tools that use only seed keywords produce narrow coverage. Tools that model natural language variation, the way real buyers actually phrase questions across different contexts, produce data that reflects actual AI behavior.

2. How do you handle prompt variation?

“Best LLM visibility tools,” “top tools for tracking AI brand visibility,” and “which software monitors citations in ChatGPT” are semantically similar but produce different AI answers. Ask how the tool accounts for phrasing variation. A tool that only tracks exact prompts will systematically undercount your visibility.

3. What exactly is tracked when my brand is “mentioned”?

There is a meaningful difference between a brand appearing in a list and a brand being cited as a primary source. Ask whether the tool distinguishes between mention types, tracks position within the response, and identifies the source domains the AI is drawing from. Platforms like GrowByData’s track what they call attributed citation share, separating passing list mentions from primary source citations, which carry meaningfully different strategic weight. Many tools do not make this distinction at all.

4. How are markets outside our primary region handled?

AI answers differ by geography. A brand that appears prominently in US AI responses may be largely absent from UK, German, or other regional responses, even for the same prompts. Ask specifically how multi-market tracking works, whether it’s included in the base plan, and whether the tool accounts for local language variants.
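
One simple way to surface this kind of gap, assuming you already have a per-market mention rate for the same prompt set, is to flag markets that fall well below the home market. The rates, the 50% threshold, and the `market_gaps` helper are illustrative assumptions.

```python
def market_gaps(rates_by_market, home_market, threshold=0.5):
    """Markets whose mention rate is below threshold x the home-market rate."""
    home_rate = rates_by_market[home_market]
    return sorted(
        market
        for market, rate in rates_by_market.items()
        if market != home_market and rate < threshold * home_rate
    )


# Hypothetical mention rates for one brand on the same prompts per market.
rates = {"US": 0.8, "UK": 0.5, "DE": 0.2}
gaps = market_gaps(rates, "US")
print(gaps)  # ['DE']
```

The threshold is a judgment call; the point is that the comparison is only possible when the tool reports market-level data rather than a single global figure.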

5. How does this data connect to our existing search reporting?

AI visibility data in isolation is interesting. AI visibility data alongside organic performance, paid share of voice, and SERP feature tracking is strategic. Ask whether the platform integrates or requires a separate workflow to connect LLM data with traditional search metrics. GrowByData unifies all three in a single dashboard precisely because enterprise teams found the siloed reporting model unworkable at scale.

The Metric That Most Tools Get Wrong: Citation Share vs. Mention

- Brand mention count shows visibility. It measures how often your brand appears in AI-generated answers.

- Mentions can be misleading. A brand may appear in a list or comparison without being treated as an authoritative source.

- Citation share shows trust. It measures how often your brand is referenced as a primary or authoritative source.

- The difference matters. Mentions show presence, while citation share shows whether AI platforms rely on your brand.

- Enterprise teams should prioritize citation share. It is more useful for understanding authority, influence, and long-term AI visibility.
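
The difference between the two metrics is easy to see with a small worked example. The sketch below assumes each observation has already been labeled as a list mention or a primary citation, and it defines citation share as a brand's primary citations divided by all primary citations in the tracked set. Both the labels and that exact formula are assumptions for illustration; vendors may define the metric differently.

```python
from collections import Counter

# Hypothetical labeled observations: (brand, kind).
observations = (
    [("BrandA", "list_mention")] * 6
    + [("BrandA", "primary_citation")] * 1
    + [("BrandB", "list_mention")] * 1
    + [("BrandB", "primary_citation")] * 3
)


def mention_counts(obs):
    """Total appearances per brand, regardless of kind."""
    return Counter(brand for brand, _ in obs)


def citation_share(obs):
    """Each brand's share of all primary citations in the tracked set."""
    cites = Counter(brand for brand, kind in obs if kind == "primary_citation")
    total = sum(cites.values())
    return {brand: count / total for brand, count in cites.items()} if total else {}


counts = mention_counts(observations)
shares = citation_share(observations)
print(dict(counts))  # {'BrandA': 7, 'BrandB': 4}
print(shares)        # {'BrandA': 0.25, 'BrandB': 0.75}
```

In this made-up data BrandA leads on mention count, yet BrandB holds three quarters of the primary citations, which is the pattern a mention-only report would hide.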

What Enterprise Teams Need That Most Tools Don’t Offer

Most LLM visibility tools were designed for a mid-market buyer: a digital marketing manager at a direct-to-consumer brand, or an agency handling a portfolio of accounts in one market. That buyer’s requirements are genuine, but they are not enterprise requirements.

Enterprise teams consistently run into four limitations with tools that weren’t built for their context:

- Data volume. An enterprise brand may have hundreds of product lines, dozens of market segments, and thousands of relevant prompts. Tools designed around a fixed prompt library of a few hundred queries don’t scale to that surface area.

- Geographic depth. AI behavior is local. The same prompt about a global brand produces meaningfully different answers in different markets. Enterprise brands operating across regions need market-level data, not a single global view.

- Competitive set size. Enterprise teams typically track ten or more competitors, not two or three. Tools that limit competitive tracking, either by count or by the granularity of competitive data, force teams to make decisions with an incomplete picture.

- Integration requirements. Enterprise search teams don’t want a new dashboard; they want LLM data flowing into the reporting frameworks they already use. That requires API access, data exports, and in some cases direct integration with analytics platforms.

GrowByData was built with these constraints as the starting point, not retrofitted to meet them. Prompt scale, geographic depth, competitive set size, and integration with paid and organic search data are core to the platform rather than add-on tiers.

How GBD Compass Unifies Search & AI Visibility

GBD Compass unifies LLM visibility, Google AI Overviews, SEO, SEM, Google Shopping, and SERP feature tracking so teams can analyze AI performance alongside traditional search visibility instead of managing separate reporting systems.

How to Build the Business Case Internally

For directors and VPs beginning to socialize LLM visibility investment upward, the framing that typically resonates with finance and executive stakeholders is: AI search is already influencing pipeline, whether or not we’re measuring it.

The case has three components:

- Exposure: How many of your highest-value queries are now answered by AI before the user clicks a result? In many commercial categories, AI Overviews and AI Mode now appear in a significant share of navigational and comparison searches. If those answers don’t include your brand, or include it unfavorably, the revenue impact is real, even if it doesn’t yet appear in your click and conversion data.

- Competitor activity: Are your direct competitors already visible in AI answers where you are not? A basic audit, manually prompting ChatGPT, Perplexity, and Google AI Mode with the questions your buyers ask, often produces enough competitive signal to justify a more structured tracking investment.

- Baseline cost: Most enterprise LLM visibility platforms can run a baseline audit before a full contract. GrowByData, for example, can scope a baseline AI search visibility audit across a defined set of categories and competitors, limiting initial investment while producing the data needed to build the full business case internally.

The Bottom Line

The LLM visibility tracking market is real, growing, and genuinely uneven. Tools that look similar at the demo stage differ significantly in prompt scale, geographic coverage, sentiment depth, and integration capability, differences that become apparent only after a team has been using the platform for a quarter and starts asking harder questions of the data.

For enterprise teams, the evaluation is worth doing carefully. The right tool is one that matches the actual scale of your brand’s AI visibility challenge, not the scale of the most accessible pricing tier.

If you’re in the early stages of building a business case or want to understand how your brand currently appears across AI platforms before any vendor evaluation, GrowByData’s team can run a baseline AI visibility audit across your key categories and competitive set. Learn more about LLM Intelligence →

Frequently Asked Questions

What is an LLM visibility tracking tool?

An LLM visibility tracking tool is software that monitors how a brand appears in AI-generated answers across platforms like ChatGPT, Perplexity, Google AI Overviews, and Gemini. It tracks whether the brand is mentioned, how it is described, which source domains the AI draws from, and how presence compares to competitors.

How is LLM visibility different from traditional SEO rankings?

Traditional SEO measures where a page appears in a list of search results. LLM visibility tracks whether and how AI systems incorporate a brand into synthesized answers. A brand can rank on page one of Google while being largely absent from AI-generated responses because AI answer engines weight citation authority and content quality differently than ranking algorithms do.

Which AI platforms should enterprise teams track?

At minimum: ChatGPT, Perplexity, Google AI Overviews, and Google AI Mode. Brands operating globally should also include Gemini and track market-specific AI behavior. The most important platforms to prioritize are the ones your specific buyers use when researching purchase decisions, which varies by industry and buyer persona.

How often do AI visibility results change?

Frequently. Major AI platforms update underlying models and retrieval logic regularly, and the web sources they draw from shift continuously. In competitive categories, AI representations of brands can shift meaningfully week over week. Continuous tracking is more strategically valuable than periodic snapshots, particularly for brands making active investments in content and citation building.

What is citation share and why does it matter more than mention count?

Citation share measures how often a brand is referenced as a trusted or primary source in AI-generated answers, relative to competitors. It is a more meaningful metric than mention count because it reflects whether AI systems have learned to treat a brand as authoritative, not just whether it appeared in a list. GrowByData tracks this as attributed citation share, distinguishing it from passing mentions. High citation share compounds over time and correlates more directly with commercial outcomes than raw mention volume.

What makes GrowByData’s LLM Intelligence different from other tools in the market?

GrowByData sits in the enterprise platform tier. It tracks AI visibility across thousands of real-world prompts, not a fixed seed list, across ChatGPT, Perplexity, Gemini, Google AI Overviews, and Google AI Mode, with multi-market and multi-language coverage built in. Unlike Tier 1 and Tier 2 tools, it tracks attributed citation share (not just mention counts), breaks sentiment down by product attribute and topic, distinguishes above-the-fold from below-the-fold AI presence, and integrates LLM data with organic, paid, and SERP feature tracking in a single view.